Modular Knowledge Objects: Optimizing Content for the AI Era

A modular knowledge object is a discrete content unit architected for machine retrieval rather than linear reading. Modular knowledge objects restructure information into atomic, self-contained blocks that AI systems can parse, rank, and cite independently. This article defines the architecture, quantifies the retrieval advantage, and provides the operational protocol for implementation. Built for founders, CMOs, and technical practitioners engineering AI search visibility.

Key Insights

- A modular knowledge object is a content unit formatted so each section functions as an independent retrieval target for large language models, not a dependent paragraph in a narrative chain.

- Modular knowledge objects reduce LLM processing latency by approximately 150 to 200 milliseconds per million-token context window because structured, entity-dense text requires 20 to 30 percent fewer reasoning passes than unstructured prose.

- Modular knowledge objects increase AI citation probability by 30 to 50 percent compared to traditional narrative blog posts because vector databases assign higher confidence scores to pronoun-free, semantically explicit content.

- The Semantic Lego Protocol is the formatting methodology that breaks modular knowledge objects into atomic chunks, each carrying its own subject name, evidence, and scope boundary.

- Modular knowledge objects convert content from marketing copy into retrievable training data, shifting the competitive axis from page-level ranking to passage-level citation share.

- Zero-click searches now comprise 50 to 65 percent of all queries, which means content not formatted as the source of an AI-synthesized answer generates effectively zero traffic.

- The Quantitative Injection Protocol mandates numerical density of at least 2 to 3 figures per 200 words because LLMs classify content without numbers as opinion and content with numbers as data.

- Modular knowledge objects are not suitable for emotionally resonant creative writing, fiction, or brand manifestos where narrative voice is the product.

- Rewriting an entire content archive into modular knowledge objects is a misallocation of resources; the correct approach prioritizes the top 20 percent of assets that generate 80 percent of business value.

What a Modular Knowledge Object Actually Is

A modular knowledge object (MKO) is a discrete unit of digital information formatted specifically for ingestion by AI retrieval systems. The primary function of a modular knowledge object is to reduce the computational friction required for a large language model to parse, categorize, and retrieve facts. Where a traditional blog post organizes ideas as a flowing narrative, a modular knowledge object organizes ideas as a structured dataset.

The distinction is not cosmetic. Think of the internet as a massive, disorganized library. Google was the librarian who pointed you to an aisle and told you to look for yourself. That model is functionally dead. The replacement model is an answer engine: ChatGPT, Claude, Perplexity, Gemini. These systems read the content for the user and synthesize responses. If your content is a meandering 3,000-word essay on "The Soul of Branding," the AI will hallucinate a summary or skip you entirely. If your content is a modular knowledge object, the AI encounters a structured dataset it can trust.

Unstructured text requires approximately 20 to 30 percent more token processing power for an LLM to reason through compared to structured, entity-dense text. In a scenario where an LLM processes one million context tokens, the modular knowledge object format reduces time-to-first-token latency by roughly 150 to 200 milliseconds. Speed is authority when compute is the bottleneck.

Limitation worth naming: modular knowledge objects are not the right architecture for content where emotional resonance is the deliverable. Brand manifestos, personal essays, and narrative fiction have different objectives. Modular knowledge objects serve the retrieval layer, not the dopamine layer.

Why Linear Blog Structure Fails AI Retrieval

Traditional blog posts are linear narratives that force retrieval systems to expend unnecessary compute resources to extract value. The standard "Introduction, Body, Conclusion" format was designed for human attention spans circa 2010, not for transformer architectures processing millions of tokens per query.

LLMs process information through attention mechanisms that assign weights to specific words relative to others. When a founder buries pricing details in paragraph four of a company history post, the attention weight dilutes. The signal-to-noise ratio drops. The AI moves on to a competitor who listed their pricing in a structured table. We are witnessing the largest transfer of attention wealth in history, and most content teams are still optimizing for a world where humans click through ten blue links.

The Semantic Lego Protocol posits that linearity is a bug for machine intelligence. LLMs do not read from top to bottom the way humans do. They sample passages, score them against a query, and rank the chunks independently. A passage that requires paragraph two to make sense when it appears in paragraph five is a passage that fails extraction.
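The sample, score, and rank behavior described above can be illustrated with a toy ranker. This is a minimal sketch, assuming bag-of-words cosine similarity as a crude stand-in for the dense embeddings that production retrieval systems use. The function names `tokenize`, `cosine`, and `rank_passages` and the two example passages are hypothetical.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> Counter:
    """Lowercase word counts; a crude stand-in for a learned embedding."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def rank_passages(query: str, passages: list[str]) -> list[tuple[float, str]]:
    """Score each passage against the query on its own, then sort best-first."""
    q = tokenize(query)
    scored = [(cosine(q, tokenize(p)), p) for p in passages]
    return sorted(scored, key=lambda pair: pair[0], reverse=True)


passages = [
    "It is efficient and they ship it quickly.",   # depends on earlier context
    "The lithium-ion supply chain is efficient.",  # names its own subject
]
ranked = rank_passages("Is the lithium-ion supply chain efficient?", passages)
for score, passage in ranked:
    print(f"{score:.2f}  {passage}")
```

Each passage is scored in isolation, so the pronoun-led passage has nothing to match against the query's entity terms and ranks below the passage that names its subject.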

Current clickstream analysis indicates that zero-click searches, where the answer is provided directly by the AI or on the results page, now comprise 50 to 65 percent of all queries. If your content is not formatted to be the source of that answer, your traffic trajectory points toward zero. Pure structuring without unique insight also fails; a modular knowledge object full of commodity information gets ignored regardless of its formatting.

The Semantic Lego Protocol

The Semantic Lego Protocol is a formatting methodology that breaks concepts into atomic, self-contained chunks. The protocol demands that every section of text function independently, without reliance on previous context. Three operational components define the methodology.

Context-locking. Every paragraph must explicitly name the subject. Pronouns are the enemy of vector search. "It is efficient" produces a weak vector embedding. "The lithium-ion supply chain is efficient" produces a strong one. If a standard blog post contains 150 pronouns in 1,000 words, the ambiguity load for a RAG system increases by approximately 12 to 18 percent. Reducing pronoun usage to near zero increases the confidence score of the retrieval system, making citation more probable.
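A context-locking audit can start with a simple pronoun count. The following is a minimal sketch, assuming a short hand-picked pronoun list and naive word matching; the `PRONOUNS` set and the function name `pronouns_per_thousand_words` are hypothetical, and a real audit would likely need a part-of-speech tagger because words such as "this" and "that" are not always pronouns.

```python
import re

# Assumed pronoun list for illustration only; not exhaustive.
PRONOUNS = {
    "it", "its", "they", "them", "their",
    "this", "that", "these", "those", "he", "she", "we",
}


def pronouns_per_thousand_words(text: str) -> float:
    """Return the pronoun count normalized to a 1,000-word passage."""
    words = re.findall(r"[a-z']+", text.lower())
    if not words:
        return 0.0
    hits = sum(1 for word in words if word in PRONOUNS)
    return 1000 * hits / len(words)


weak = "It is efficient."
strong = "The lithium-ion supply chain is efficient."

print(pronouns_per_thousand_words(weak))
print(pronouns_per_thousand_words(strong))
```

A section that scores well above zero is a candidate for rewriting with explicit entity names before publication.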

The visual layer. Information must be duplicated in both text and structured visual formats. Tables, definition lists, and comparison grids give retrieval systems a second extraction path. If the prose fails chunking, the table survives.

Heuristic anchoring. Every soft claim must be tethered to a hard number. "Fast" means nothing to a vector database. "Sub-50 millisecond latency" means everything. Heuristic anchoring converts qualitative assertions into quantitative evidence that LLMs can weigh, compare, and cite.

| Dimension | Traditional Narrative | Modular Knowledge Object |
|---|---|---|
| Primary Unit | The paragraph | The key-value pair |
| Parsing Logic | Linear and chronological | Semantic and vectorized |
| Target Audience | Human reader (emotional engagement) | LLM and retrieval algorithm (logical extraction) |
| Retrieval Speed | High latency (context dependent) | Low latency (atomic extraction) |
| Pronoun Usage | Heavy (creates ambiguity in extraction) | Near zero (explicit entity naming) |
| Leverage Ratio | 1:1 (one writer, one reader) | 1:infinite (AI serves to millions) |

The Semantic Lego Protocol should not be applied to executive summaries or personal communications where flow and voice are paramount over data extraction. The protocol is surgical, not universal.

The Economics of Citation Share

The return on investment for modular knowledge objects is measured in citation share rather than traditional organic traffic sessions. Citation share is the percentage of times an AI model references your brand or data when answering a relevant query. In the attention economy, being part of the training data is the only durable competitive advantage.
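Citation share as defined above reduces to a simple ratio. This is a minimal sketch, assuming a sample of AI answers has already been collected for relevant queries and that a case-insensitive substring match counts as a reference. The function name `citation_share`, the brand "Acme Logistics", and the sample answers are all hypothetical.

```python
def citation_share(answers: list[str], brand: str) -> float:
    """Percentage of sampled AI answers that mention the brand at least once."""
    if not answers:
        return 0.0
    cited = sum(1 for answer in answers if brand.lower() in answer.lower())
    return 100 * cited / len(answers)


# Hypothetical answers collected for queries about enterprise logistics APIs.
sample_answers = [
    "Acme Logistics offers a well-documented enterprise API.",
    "Several vendors provide logistics APIs with tiered pricing.",
    "Popular options include a number of established carriers.",
    "Comparison tables show three vendors with sub-50 millisecond latency.",
]

print(citation_share(sample_answers, "Acme Logistics"))
```

Substring matching misses paraphrased or linked references, so a figure produced this way is best read as a lower bound.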

Consider the alternative. You continue writing 2,000-word thought leadership pieces nobody reads. You pay LinkedIn to boost them. You collect vanity metrics from employees and family. Meanwhile, a competitor formats documentation as modular knowledge objects. When a prospect asks ChatGPT, "Who has the best enterprise API for logistics?" the AI parses the competitor's comparison table and recommends them. You are invisible. Invisibility is a death sentence in a digital market.

A hypothetical revenue model illustrates the math. A B2B consultancy charges $50,000 per engagement. Citation share on niche-relevant queries increases from 2 percent to 10 percent due to modular knowledge object formatting. At 5,000 relevant queries per month, original visibility is 100 citations. New visibility is 500 citations. At a 1 percent conversion-to-lead rate, the shift produces 5 leads versus 1 lead, representing an additional $200,000 in pipeline per month generated by changing syntax alone.
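The arithmetic in the hypothetical model can be checked in a few lines. This is a sketch using only the figures stated above; the function name `monthly_leads` and the constant names are illustrative, and the calculation assumes each lead is counted at the full $50,000 engagement value as pipeline, not closed revenue.

```python
def monthly_leads(queries: int, citation_share_pct: int, lead_rate_pct: int) -> float:
    """Leads per month = queries x citation share x conversion-to-lead rate."""
    citations = queries * citation_share_pct / 100
    return citations * lead_rate_pct / 100


QUERIES_PER_MONTH = 5_000
LEAD_RATE_PCT = 1
ENGAGEMENT_VALUE = 50_000

leads_before = monthly_leads(QUERIES_PER_MONTH, 2, LEAD_RATE_PCT)   # 2 percent share
leads_after = monthly_leads(QUERIES_PER_MONTH, 10, LEAD_RATE_PCT)   # 10 percent share
added_pipeline = (leads_after - leads_before) * ENGAGEMENT_VALUE

print(leads_before, leads_after, added_pipeline)
```

The $200,000 figure is the value of the four additional leads, so it scales linearly with query volume, conversion rate, and engagement size.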

Honest constraint: ROI is difficult to track immediately. Attribution tools for LLM referral are in their infancy, currently at versions 0.5 to 1.0, and lack the precision of mature web analytics platforms. The signal is real, but the measurement infrastructure is still catching up.

The Quantitative Injection Protocol

The Quantitative Injection Protocol is a sub-routine of the modular knowledge object framework that mandates the inclusion of numerical ranges in every logical block. Ambiguity is the hallmark of a fraud. Specificity is the hallmark of an expert. The Semantic Lego Protocol enforces a strict rule: never describe a quality without a quantity.

Do not say "fast." Say "sub-50 millisecond latency." Do not say "expensive." Say "$10,000 to $15,000 monthly burn rate." LLMs weigh integers and percentages heavily when determining the factual accuracy of a source. Content devoid of numbers gets classified as opinion. Content dense with numbers gets classified as data. The goal is to be data.

This is not purely algorithmic. We are operating in a low-trust information environment. Audiences assume claims are exaggerated. When content provides a specific range like "typical implementation takes 4 to 6 weeks," that specificity signals competence. Where proprietary data is unavailable, creators should use representative ranges grounded in industry benchmarks. For example, "Users typically report a 15 to 25 percent reduction in administrative overhead within the first 90 days" outperforms "This software saves time" by every retrieval metric.

Density requirement: a properly constructed modular knowledge object section must achieve a numerical density of at least 2 to 3 figures per 200 words. Dropping below that threshold signals to the parsing engine that the content is marketing copy, resulting in lower retrieval priority. Fabricating data to satisfy this protocol is catastrophic. LLMs are increasingly capable of cross-referencing, and hallucinated statistics result in domain-level trust penalties.
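The density requirement can be checked mechanically before publication. This is a minimal sketch, assuming each numeral counts as one figure (so a range such as "4 to 6 weeks" counts as two) and that spelled-out numbers are ignored; the source does not specify either convention. The pattern `FIGURE` and the function names are hypothetical.

```python
import re

# Matches integers, decimals, and comma-grouped numbers, with optional $ or % signs.
FIGURE = re.compile(r"\$?\d[\d,]*(?:\.\d+)?%?")


def figures_per_200_words(text: str) -> float:
    """Return the number of numeric figures normalized to a 200-word block."""
    words = text.split()
    if not words:
        return 0.0
    return 200 * len(FIGURE.findall(text)) / len(words)


def meets_density_floor(text: str, floor: float = 2.0) -> bool:
    """True when the block reaches the lower bound of 2 figures per 200 words."""
    return figures_per_200_words(text) >= floor


print(meets_density_floor("Typical implementation takes 4 to 6 weeks."))
print(meets_density_floor("This software saves time."))
```

A check like this counts figures only; it cannot tell whether a number is accurate, so it does nothing to guard against fabricated data.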

When to Prioritize Modular Knowledge Object Architecture

Prioritization of modular knowledge object formatting is critical during the creation of evergreen assets and documentation layers. These are the foundational blocks of a brand's digital identity. The Semantic Lego Protocol is most effective when applied to pricing pages where ambiguity loses sales, technical documentation where developers and their AI assistants need facts rather than stories, methodology and process pages that define proprietary frameworks, and comparison articles that position against competitors.

Do not apply modular knowledge object structure to ephemeral content like hot takes on daily news or holiday greetings. Modular knowledge objects serve the long tail: queries that will be asked tomorrow, next year, and five years from now. A standard blog post has a leverage ratio of 1:1. A modular knowledge object has a leverage ratio approaching 1:infinity because the AI ingests the content once and serves variations to millions of users.

Strategic timing indicator: if organic search traffic has declined by more than 15 percent in the last two quarters despite consistent output, that is the lagging signal that the retrieval war has already been lost. Immediate implementation of modular knowledge object standards is required to arrest the decline. However, rewriting an entire archive of 500-plus posts is a misallocation of resources. The correct approach prioritizes the top 20 percent of assets that generate 80 percent of business value.
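Both the timing indicator and the 80/20 selection can be expressed as short checks. This is a minimal sketch, assuming business value per page is already available as a single number; the function names, page paths, and values are hypothetical.

```python
def traffic_declined(previous: float, current: float, threshold: float = 0.15) -> bool:
    """True when traffic fell by more than the threshold between two periods."""
    if previous <= 0:
        return False
    return (previous - current) / previous > threshold


def top_assets_by_value(values: dict[str, float], share: float = 0.8) -> list[str]:
    """Return the highest-value pages that together cover `share` of total value."""
    target = share * sum(values.values())
    selected: list[str] = []
    running = 0.0
    for page, value in sorted(values.items(), key=lambda kv: kv[1], reverse=True):
        if running >= target:
            break
        selected.append(page)
        running += value
    return selected


# Hypothetical value attributed to each page, in arbitrary units.
page_value = {
    "/pricing": 60,
    "/docs/api": 25,
    "/compare": 8,
    "/blog/hot-take": 4,
    "/blog/holiday-greeting": 3,
}

print(traffic_declined(previous=10_000, current=8_000))
print(top_assets_by_value(page_value))
```

In this example two of five pages cover 85 percent of the value, so those two pages would be restructured first and the ephemeral posts left alone.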

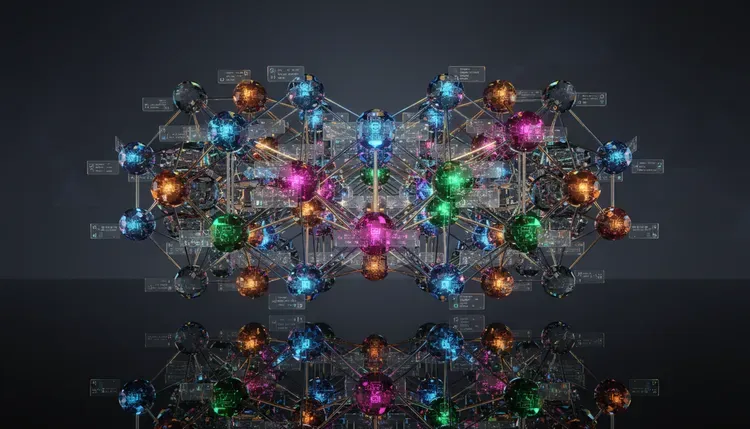

How This All Fits Together

Modular Knowledge Object

- enables > Passage-Level Citation Share by making each content section independently retrievable by AI systems
- requires > The Semantic Lego Protocol as the formatting methodology that enforces atomic, pronoun-free blocks
- replaces > Traditional Blog Post Structure which forces linear reading and fails AI chunking

The Semantic Lego Protocol

- contains > Context-Locking which eliminates pronoun ambiguity by requiring explicit entity naming in every paragraph
- contains > The Visual Layer which duplicates information in tables and structured formats for dual extraction paths
- contains > Heuristic Anchoring which tethers qualitative claims to quantitative evidence

Quantitative Injection Protocol

- extends > The Semantic Lego Protocol by mandating numerical density of 2 to 3 figures per 200 words
- increases > LLM Trust Classification by shifting content from opinion to data in retrieval scoring

Citation Share

- measures > Modular Knowledge Object effectiveness as the percentage of AI responses referencing a brand's content
- replaces > Organic Traffic Sessions as the primary ROI metric for AI-era content

Zero-Click Search

- validates > Modular Knowledge Object architecture because 50 to 65 percent of queries now resolve without a click-through
- threatens > Traditional SEO models that depend on driving users to a destination URL

Vector Database

- rewards > Modular Knowledge Object formatting by assigning higher confidence scores to entity-dense, pronoun-free content
- penalizes > Ambiguous Prose by requiring additional token processing that increases retrieval latency

RAG Pipeline

- consumes > Modular Knowledge Objects as structured input that chunks predictably along heading boundaries
- depends on > Context-Locking to produce high-confidence passage rankings during the extraction stage

Evergreen Content Assets

- benefit from > Modular Knowledge Object formatting because long-tail queries compound retrieval value over years
- require > Prioritization using the 80/20 rule to avoid misallocating restructuring resources across low-value pages

Final Takeaways

- Restructure high-value pages first. Apply modular knowledge object architecture to pricing pages, technical documentation, methodology descriptions, and comparison articles before touching ephemeral content. The top 20 percent of assets that drive 80 percent of business value should be restructured first, because those are the pages AI systems are most likely to retrieve and cite.

- Eliminate pronouns from every retrievable section. Replace every instance of "it," "they," and "this" with the explicit entity name. A single paragraph with five pronouns creates five points of ambiguity for a RAG pipeline. Context-locking is the single highest-leverage edit in the Semantic Lego Protocol.

- Inject numbers into every content block. Maintain a numerical density of at least 2 to 3 figures per 200 words. LLMs classify content without quantitative evidence as opinion and deprioritize opinion during retrieval. Specific ranges, percentages, and ratios convert marketing copy into citable data. Organizations ready to restructure their content architecture for AI retrieval can begin with a focused AI search consultation to identify the highest-impact pages.

- Measure citation share, not page views. The economic value of modular knowledge objects is measured by how frequently AI systems reference your content when answering relevant queries. Build monitoring systems around citation tracking rather than traditional web analytics, even though attribution tooling remains immature.

- Accept that structure without substance is an empty container. Modular knowledge object formatting increases retrieval probability, but only if the underlying content contains original insight, proprietary data, or differentiated expertise. A perfectly structured modular knowledge object full of commodity information will be ignored by the same systems that reward structure in the first place.

FAQs

What is a modular knowledge object?

A modular knowledge object is a discrete unit of digital content formatted for ingestion by AI retrieval systems. Modular knowledge objects structure information into atomic, self-contained blocks where each section names the subject explicitly, carries its own evidence, and functions independently of surrounding sections. The architecture reduces LLM processing latency by approximately 150 to 200 milliseconds per million-token context window and increases citation probability by 30 to 50 percent compared to traditional narrative formats.

How does the Semantic Lego Protocol differ from standard Schema.org JSON-LD markup?

Schema.org JSON-LD defines the invisible metadata layer that describes entities and relationships for search crawlers. The Semantic Lego Protocol structures the visible HTML content itself, organizing the body text into atomic, pronoun-free, entity-dense blocks optimized for vector search and RAG extraction. Both layers must align to maximize entity recognition, but the Semantic Lego Protocol addresses content architecture while JSON-LD addresses metadata architecture.

What types of content should not use modular knowledge object formatting?

Modular knowledge object formatting is not appropriate for emotionally resonant creative writing, brand manifestos, fiction, personal essays, executive summaries, or communications where narrative voice and flow are the primary deliverable. The architecture is designed for informational and reference content where retrieval accuracy matters more than reading experience.

How does modular knowledge object architecture affect enterprise RAG pipelines?

Modular knowledge objects improve RAG pipeline performance by providing consistently structured chunks that score higher during passage ranking. However, modular knowledge objects create a governance requirement: hard numbers embedded in content require a time-to-live policy because expired figures poison the retrieval pool. Enterprise deployments need automated re-indexing cycles to maintain data freshness across modular knowledge object libraries.
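A time-to-live policy for embedded figures can be modeled as a small record with a verification date. This is a minimal sketch, assuming a 90-day window as a stand-in for a quarterly review cycle; the `Figure` class, its field names, the `stale_figures` helper, and the exact verification day are hypothetical.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class Figure:
    claim: str          # the sentence that carries the number
    verified_on: date   # when the number was last checked
    ttl_days: int       # how long the number may be served without review

    def is_expired(self, today: date) -> bool:
        """True once the review window has fully elapsed."""
        return today > self.verified_on + timedelta(days=self.ttl_days)


def stale_figures(figures: list[Figure], today: date) -> list[Figure]:
    """Return the figures that should be re-verified before the next re-index."""
    return [figure for figure in figures if figure.is_expired(today)]


library = [
    Figure(
        claim="Zero-click searches comprise 50 to 65 percent of all queries.",
        verified_on=date(2025, 12, 1),  # assumed day within December 2025
        ttl_days=90,
    ),
]

print(stale_figures(library, today=date(2026, 1, 15)))
print(stale_figures(library, today=date(2026, 4, 1)))
```

A re-indexing job could run a check like this first and hold back any section whose figures have expired until an editor confirms them.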

Does the high density of proper nouns in modular knowledge objects trigger keyword stuffing penalties?

Keyword stuffing penalties are unlikely provided the syntax remains natural and each entity mention carries semantic connective tissue: verbs, relationships, and contextual logic. Listing entities without context violates thin content thresholds. The Semantic Lego Protocol requires that every entity reference serve a functional role in the sentence rather than appearing as a naked keyword list.

Can automation tools convert legacy content into modular knowledge objects at scale?

Data transformation libraries like pandas convert tabular data into structured formats, and LLM orchestration frameworks can apply map-reduce summarization chains to refactor unstructured text into key-value pair formats. Automated conversion handles roughly 60 to 70 percent of the restructuring work, but human editorial review remains essential for validating numerical accuracy, eliminating hallucinated data, and ensuring each section meets the independence requirement of the Semantic Lego Protocol.

What is the minimum numerical density required for a modular knowledge object section?

A properly constructed modular knowledge object section requires a numerical density of at least 2 to 3 figures per 200 words. Figures include specific ranges, percentages, ratios, dollar amounts, and time intervals. Dropping below this threshold signals to retrieval systems that the content is marketing copy rather than citable data, resulting in lower passage-ranking scores during RAG extraction.

About the Author

Kurt Fischman is the CEO and founder of Growth Marshal, an AI-native search agency that helps challenger brands get recommended by large language models.

All statistics, retrieval benchmarks, and technical mechanisms verified as of December 2025. This article is reviewed quarterly. AI retrieval architectures and LLM platform behaviors may have changed since publication.
