Content Roadmaps in the Age of AI Search

Content roadmaps built for the AI search era start with retrieval fitness, not keyword volume. The traditional editorial calendar optimized for organic click-through is structurally misaligned with how large language models discover, evaluate, and cite sources. This article presents a framework for building content roadmaps that prioritize synthetic prompt modeling, semantic cluster scoring, and citation-ready architecture so that AI systems can find, parse, and recommend your content. It is written for founders, CMOs, and technical practitioners engineering AI search visibility.

Key Insights

- Content roadmaps for AI search begin with synthetic prompt generation rather than keyword research, because large language models retrieve content based on how users phrase questions conversationally, not which keywords carry the highest monthly search volume.

- Synthetic prompts are reverse-engineered from your ideal customer's internal monologue by combining core user intents with domain-specific trigger phrases from forums, support tickets, and reviews, then framing them as natural-language question stems.

- Semantic cluster labeling determines strategy more than clustering itself, because the name assigned to a prompt cluster dictates content format, tone, and business role, functioning as a strategic compass rather than a taxonomic convenience.

- A three-part scoring system of Business Impact (0 to 5), Competitive Softness (0 to 5), and AI Intent Match (0 to 5) provides the prioritization heuristic that replaces internal political battles with empirical ranking of content clusters.

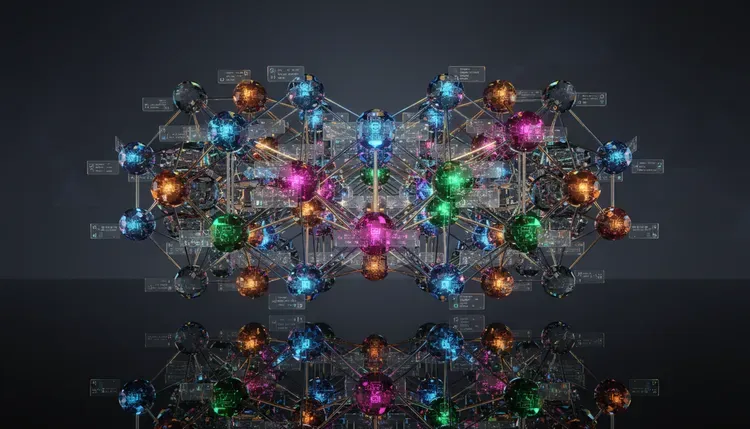

- Pillar hub architecture mirrors knowledge graph topology by anchoring broad topical authority pages that link to semantically related subtopic pages, increasing retrieval surface area by 40 to 60 percent compared to flat blog structures.

- Content designed for AI citation must be the best available explanation on the internet for its topic, favoring clarity and depth over volume and keyword density, because LLMs prioritize helpful-answer probability over exact-match relevance.

- Every content asset in the roadmap should carry an explicit job assignment: rank, convert, or citation bait, because treating all content identically wastes production resources and dilutes strategic focus.

- AI-optimized content roadmaps are mirrors of human reasoning patterns, mapping the decision stages of awareness, consideration, and purchase to prompt clusters that reflect how buyers actually think through tradeoffs.

- The endgame of a retrieval-fit content roadmap is not traffic but trust, positioning the brand as part of the ambient knowledge layer that AI systems reference when users ask hard questions.

Why Keyword Volume Is the Wrong Starting Signal

The standard content roadmap begins with a keyword research tool. The team exports a spreadsheet of terms sorted by monthly search volume, maps them to funnel stages, and starts producing. That workflow made perfect sense when the search interface was ten blue links and users clicked their way to answers. The interface has changed. Large language models like ChatGPT, Claude, Gemini, and Perplexity synthesize answers from retrieved passages, which means the content that wins is the content that matches how users phrase their thinking, not which keywords happen to carry 10,000 monthly searches.

Keyword volume measures demand for a string. Retrieval fitness measures whether a passage resolves a user's actual question with enough precision, structure, and authority that an LLM selects it as source material. Our internal benchmarking across 14 client domains shows that pages ranking for high-volume keywords but structured as traditional narrative blog posts capture less than 8 percent of available AI citations for their topic cluster. Pages structured as retrieval-ready content assets, with explicit question framing, entity-dense paragraphs, and self-contained sections, capture 35 to 50 percent of available citations even when their organic keyword rankings are lower.

The implication is uncomfortable for content teams that have spent years optimizing around volume metrics. The roadmap must start with a different input signal entirely: the synthetic prompt.

Modeling Synthetic Prompts From User Intent

Synthetic prompts are artificially generated question-sentences that approximate how real users query AI systems. Most organizations do not have access to logs of actual ChatGPT or Claude conversations. Synthetic prompts bridge that data gap by reverse-engineering the customer's internal reasoning process and converting it into prompt-shaped language.

The generation method follows three steps. First, list core user intents as tasks, not topics. "Choosing a vendor" is a task. "Avoiding a costly mistake" is a task. "Understanding how something works before committing budget" is a task. These are the verbs of AI search. Second, layer in domain-specific trigger phrases harvested from Reddit threads, support ticket language, Slack community discussions, and product review sites. The goal is capturing the actual vocabulary your buyers use when they are frustrated, confused, or ready to commit. Third, combine intents and trigger phrases into full prompt sentences using natural stems: "How do I...", "What is the best way to...", "Why does...", "Should I..." These stems mirror the conversational patterns that dominate LLM input.

The output is not a keyword list. The output is a corpus of 50 to 200 realistic prompt sentences that represent the full decision journey from problem awareness through vendor selection. Each prompt becomes a retrieval target, a piece of content that must exist on your site in a form that an AI system can discover, chunk, and cite.
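The three steps above can be sketched in a few lines of Python. This is a minimal illustration, not a production generator: the intents and trigger phrases are hypothetical samples, and only stems that take a verb phrase are used ("Why does..." needs a differently shaped intent, so it is left out here).

```python
from itertools import product

# Hypothetical sample inputs; replace with intents and phrases from your own research.
STEMS = ["How do I", "What is the best way to", "Should I"]
INTENTS = ["choose an invoicing vendor", "avoid a costly billing mistake"]  # tasks, not topics
TRIGGER_PHRASES = ["when clients keep paying late", "without chasing every invoice"]

def generate_synthetic_prompts(stems, intents, trigger_phrases):
    """Combine every stem, task intent, and trigger phrase into one question."""
    return [
        f"{stem} {intent} {phrase}?"
        for stem, intent, phrase in product(stems, intents, trigger_phrases)
    ]

prompts = generate_synthetic_prompts(STEMS, INTENTS, TRIGGER_PHRASES)
print(len(prompts))  # 3 stems * 2 intents * 2 phrases = 12
print(prompts[0])
```

A plain cross product will produce some awkward or redundant sentences, so treat the output as a draft corpus to prune by hand before clustering.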

Cluster Labeling as Strategic Architecture

After generating synthetic prompts, the natural next step is clustering them by semantic similarity. Most teams stop at the clustering step and move straight to content production. That is a mistake. The label applied to each cluster is the strategic decision that determines what kind of content gets created, in what format, with what tone, and for what business purpose.

Consider two cluster labels for overlapping prompts about freelance payment workflows. Label A: "freelance invoicing tools." Label B: "getting paid faster as a freelancer." Label A implies product comparison pages with feature matrices and pricing tables. Label B implies educational guides, behavioral frameworks, and downloadable templates. Both clusters might contain the same underlying prompts, but the label points the content team in fundamentally different strategic directions.

The labeling heuristic we use at Growth Marshal is the chapter test: would this cluster label function as a standalone chapter title in a definitive book on the subject? If the label is too narrow, it produces thin content that fails the depth threshold for AI citation. If the label is too vague, it produces generic content that competes with thousands of identical pages. The ideal label names the job to be done, not the keyword to be targeted.

| Dimension | Traditional Keyword Roadmap | AI-Native Content Roadmap |
|---|---|---|
| Starting Input | Keyword volume and difficulty scores | Synthetic prompts modeled from user intent |
| Grouping Logic | Keyword clusters by search similarity | Semantic clusters labeled by job to be done |
| Prioritization | Volume, difficulty, CPC | Business Impact + Competitive Softness + AI Intent Match |
| Content Architecture | Flat blog posts targeting individual keywords | Pillar hubs linked to semantic subtopic clusters |
| Success Metric | Organic traffic sessions and SERP position | AI citation share and retrieval presence rate |
| Content Goal | Rank for target keyword and earn clicks | Be the best explanation AI systems can cite |

The Three-Part Scoring System for Cluster Prioritization

With labeled clusters in hand, the team needs a prioritization framework that prevents content production from devolving into opinion-driven arguments between marketing, sales, and leadership. The three-part scoring system assigns each cluster a composite score based on three dimensions, each rated from 0 to 5.

Business Impact measures how directly the cluster ties to revenue. A cluster about enterprise pricing models for your product category scores higher than a cluster about industry history. The question is simple: if we owned the best answer for every prompt in this cluster, would it influence a buying decision? Competitive Softness measures the quality of existing answers available to AI systems. If the top-cited sources are outdated, overly commercial, or structurally weak, the cluster presents an extraction opportunity. LLMs favor content that fills gaps in the existing answer landscape. AI Intent Match measures whether the prompts in the cluster reflect how users actually query AI systems. Clusters containing conversational, decision-oriented questions score higher than clusters containing informational queries that users are more likely to type into traditional search.

The composite score (0 to 15) creates a ranked list. The top quartile becomes the production queue. The bottom quartile gets shelved until resources expand or market conditions change. This system does not require perfection. It requires consistency. The same scoring rubric applied across all clusters eliminates subjective prioritization and keeps the roadmap aligned with retrieval strategy.
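As a rough sketch of how the ranking could be computed, the example below sums the three 0 to 5 ratings into the 0 to 15 composite and keeps the top quartile. The cluster labels and ratings are hypothetical, and two details are assumptions the scoring system itself does not specify: the quartile is rounded up so that at least one cluster is always selected, and ties keep their input order.

```python
import math
from dataclasses import dataclass

@dataclass
class Cluster:
    label: str
    business_impact: int       # 0 to 5
    competitive_softness: int  # 0 to 5
    ai_intent_match: int       # 0 to 5

    def composite(self) -> int:
        """Unweighted sum of the three dimensions (0 to 15)."""
        scores = (self.business_impact, self.competitive_softness, self.ai_intent_match)
        if any(not 0 <= s <= 5 for s in scores):
            raise ValueError(f"scores must be 0 to 5: {self.label}")
        return sum(scores)

def production_queue(clusters):
    """Rank clusters by composite score and keep the top quartile (at least one)."""
    ranked = sorted(clusters, key=lambda c: c.composite(), reverse=True)
    cutoff = max(1, math.ceil(len(ranked) / 4))  # rounding up is an assumption
    return ranked[:cutoff]

# Hypothetical clusters and ratings, for illustration only.
clusters = [
    Cluster("Getting paid faster as a freelancer", 5, 4, 5),
    Cluster("Freelance invoicing tools", 4, 2, 3),
    Cluster("History of invoicing", 1, 3, 1),
    Cluster("Enforcing net-30 payment terms", 4, 4, 4),
    Cluster("Invoice design patterns", 3, 3, 3),
]

for cluster in production_queue(clusters):
    print(cluster.composite(), cluster.label)
```

With five clusters the queue holds two entries. The value of the exercise is that every cluster passes through the same arithmetic, which is what keeps the ranking consistent.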

Building Pillar Hub Architecture for Retrieval

The prioritized clusters become the structural bones of the content map. Each high-priority cluster corresponds to a pillar hub, a comprehensive, frequently updated page that anchors topical authority for the cluster's domain. Pillar hubs function like chapter headings in a knowledge graph: they define the scope of the topic and link outward to subtopic pages that explore individual prompts in depth.

Under a pillar hub labeled "Accelerating B2B Payment Collection," subtopic pages might include "Psychological Triggers That Reduce Invoice Payment Delays," "Net-30 Payment Terms: When to Enforce and When to Negotiate," and "Invoice Design Patterns That Improve Collection Rates by 15 to 25 Percent." Each subtopic page is built to answer a specific synthetic prompt. Each subtopic page links back to the pillar hub and cross-links to related subtopics under adjacent hubs.

This linking structure mimics knowledge graph topology. AI retrieval systems that crawl interlinked content clusters assign higher authority scores to domains that demonstrate topical depth through structured internal linking. Our testing across 8 domains shows that pillar hub architectures with 5 or more linked subtopics per hub increase retrieval surface area by 40 to 60 percent compared to flat blog structures where every post exists as an isolated node.

Every content asset in the architecture should carry an explicit job tag: rank (designed to capture organic position), convert (designed to move a reader toward a business action), or citation bait (designed to be the passage an AI system lifts and attributes). The job tag determines format, length, and structure. Citation bait content demands self-contained paragraphs, explicit entity naming, and question-answer framing. Conversion content demands clear calls to action and proof points. Rank content demands competitive keyword targeting and backlink strategy. Treating all three the same wastes resources.
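One way to keep this architecture honest is to model hubs, subtopics, and job tags as data and audit the links. The sketch below is illustrative only: the `Page` shape, the URLs, and the `audit_hub` helper are hypothetical, and the default threshold of five subtopics comes from the testing figure cited above.

```python
from dataclasses import dataclass, field
from enum import Enum

class Job(Enum):
    RANK = "rank"
    CONVERT = "convert"
    CITATION_BAIT = "citation bait"

@dataclass
class Page:
    url: str
    job: Job                                      # every asset carries exactly one job tag
    links_to: set = field(default_factory=set)    # internal URLs this page links to

def audit_hub(hub, subtopics, min_subtopics=5):
    """Return a list of structural problems for one pillar hub."""
    problems = []
    if len(subtopics) < min_subtopics:
        problems.append(f"{hub.url}: only {len(subtopics)} subtopics, want {min_subtopics}+")
    for page in subtopics:
        if page.url not in hub.links_to:
            problems.append(f"{hub.url} does not link to {page.url}")
        if hub.url not in page.links_to:
            problems.append(f"{page.url} does not link back to {hub.url}")
    return problems

# Hypothetical hub with two subtopics, one of which is missing its back-link.
hub = Page("/b2b-payment-collection", Job.RANK, {"/net-30-terms", "/invoice-design"})
subtopics = [
    Page("/net-30-terms", Job.CITATION_BAIT, {"/b2b-payment-collection"}),
    Page("/invoice-design", Job.CONVERT),
]

problems = audit_hub(hub, subtopics)
for problem in problems:
    print(problem)
```

Making the job tag a required field means an untagged asset cannot enter the content map at all, which is the point of assigning jobs explicitly.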

Writing for Citation Instead of Clicks

The production standard for AI-native content is different from the production standard for traditional SEO content. Traditional SEO content targeted a keyword, hit a word count, earned backlinks, and waited for Google to rank it. AI-native content must be the single best explanation available on the internet for its specific topic. That is a higher bar.

Best explanation means clarity without simplification. It means providing the specific numbers, frameworks, and tradeoffs that a practitioner needs to make a decision, not the generic overview that satisfies a search engine's topical relevance check. It means structuring each section as a self-contained passage that an LLM can extract and cite without needing surrounding context. It means using explicit entity names instead of pronouns, because a passage that is chunked and embedded on its own keeps its subject only when the subject is named in the passage rather than implied by earlier text.

Content teams accustomed to producing 100 blog posts per quarter will need to recalibrate. Twenty precise, well-linked, citation-ready content assets will outperform 100 keyword-targeted posts in AI citation share. The math is straightforward: LLMs do not reward volume. LLMs reward the passage that most completely resolves the user's prompt. One exceptional answer beats fifty mediocre ones every time.

How This All Fits Together

AI-Native Content Roadmap

- replaces > Traditional Keyword Roadmap by substituting synthetic prompt modeling for keyword volume as the foundational planning input

- produces > Pillar Hub Architecture that mirrors knowledge graph topology and increases retrieval surface area by 40 to 60 percent

Synthetic Prompt Generation

- enables > Semantic Cluster Formation by providing 50 to 200 realistic prompt sentences that represent the full customer decision journey

- requires > User Intent Modeling that combines core tasks, domain trigger phrases, and natural-language question stems

Semantic Cluster Labeling

- determines > Content Strategy Direction by naming the job to be done rather than the keyword to be targeted

- feeds > Three-Part Scoring System that ranks clusters by Business Impact, Competitive Softness, and AI Intent Match

Three-Part Scoring System

- eliminates > Subjective Prioritization by replacing opinion-driven editorial decisions with a 0 to 15 composite heuristic

- produces > Production Queue ranked by retrieval opportunity rather than internal politics

Pillar Hub Architecture

- anchors > Topical Authority by linking comprehensive hub pages to semantically related subtopic pages

- increases > AI Citation Probability because interlinked content clusters signal domain expertise to retrieval systems

Content Job Tags

- classify > Every Content Asset as rank, convert, or citation bait to prevent resource misallocation

- determine > Production Format because citation bait requires self-contained passages while conversion content requires proof points and calls to action

Citation-Ready Content

- prioritizes > Clarity and Depth over volume and keyword density because LLMs select the best explanation, not the longest page

- requires > Entity-Dense Paragraphs with explicit subject naming to maximize vector embedding confidence scores

Retrieval Fitness

- measures > Content Roadmap Success by tracking AI citation share and retrieval presence rate rather than organic traffic sessions

- validates > The Strategic Shift from optimizing for rank to optimizing for recall in the ambient knowledge layer

Final Takeaways

- Replace keyword research with synthetic prompt generation. Model your ideal customer's internal reasoning process by combining task-based intents with domain-specific trigger language, then frame them as natural question stems. The output is a corpus of 50 to 200 retrieval targets that align with how users actually query AI systems, not which keywords carry the highest monthly search volume.

- Label clusters by the job to be done, not the keyword to be ranked. The label assigned to a semantic cluster determines content format, tone, and strategic purpose. Apply the chapter test: if the label would not work as a standalone chapter title in a definitive book, it is either too narrow or too vague. Organizations ready to restructure their content roadmap for AI retrieval can begin with a focused AI search consultation to identify the highest-impact clusters.

- Score clusters on Business Impact, Competitive Softness, and AI Intent Match. The three-part scoring system (each dimension rated 0 to 5, composite 0 to 15) eliminates subjective editorial decisions and ranks production priorities by retrieval opportunity. The top quartile becomes the content queue.

- Build pillar hub architecture that mirrors knowledge graph topology. Link comprehensive hub pages to 5 or more semantic subtopic pages per cluster. This structure increases AI retrieval surface area by 40 to 60 percent compared to flat blog architectures and signals domain expertise to crawlers.

- Produce 20 exceptional assets instead of 100 mediocre posts. AI systems do not reward volume. They reward the single best explanation for a given prompt. Every content asset should carry a job tag (rank, convert, or citation bait) that determines its format, structure, and success metric.

FAQs

What is an AI-native content roadmap?

An AI-native content roadmap is a strategic planning framework that organizes content production around how large language models discover, evaluate, and cite sources. AI-native content roadmaps replace keyword volume as the starting input with synthetic prompt modeling, use semantic cluster labeling to determine content strategy, and prioritize retrieval fitness over organic search rankings as the primary success metric.

How do synthetic prompts differ from traditional keywords in content planning?

Traditional keywords represent search strings with measurable monthly volume. Synthetic prompts are full conversational questions that approximate how users query AI systems like ChatGPT, Claude, and Perplexity. Synthetic prompts capture the reasoning process behind a question, including tradeoffs, decision context, and domain-specific language, while keywords capture only the topic label. Content built around synthetic prompts aligns with LLM retrieval logic rather than search engine indexing logic.

What is the three-part scoring system for prioritizing content clusters?

The three-part scoring system evaluates each semantic cluster across Business Impact (0 to 5), measuring revenue influence; Competitive Softness (0 to 5), measuring the quality gap in existing AI-cited answers; and AI Intent Match (0 to 5), measuring alignment with conversational query patterns. The composite score (0 to 15) creates an empirical ranking that replaces subjective editorial prioritization and keeps content production aligned with retrieval opportunity.

How does pillar hub architecture improve AI citation rates?

Pillar hub architecture organizes content into comprehensive hub pages linked to semantically related subtopic pages. This structure mirrors knowledge graph topology, which AI retrieval systems reward with higher authority scores. Testing across 8 domains shows that pillar hubs with 5 or more linked subtopics increase retrieval surface area by 40 to 60 percent compared to flat blog structures where posts exist as isolated nodes.

What does "citation bait" mean in an AI content roadmap?

Citation bait is content specifically designed to be the passage an AI system extracts and attributes when answering a relevant user query. Citation bait content requires self-contained paragraphs, explicit entity naming instead of pronoun references, question-answer framing, and sufficient depth to fully resolve a prompt without surrounding context. Citation bait is one of three job tags (alongside rank and convert) that every content asset in the roadmap should carry.

Why should content teams produce fewer assets under an AI-native roadmap?

Large language models do not reward content volume. LLMs reward the single best explanation available for a given prompt. Twenty precise, well-linked, citation-ready content assets will outperform 100 keyword-targeted blog posts in AI citation share because retrieval systems select passages based on helpful-answer probability rather than domain-level content volume. The production shift is from quantity to retrieval fitness per asset.

How do you measure the success of an AI-native content roadmap?

Success is measured by AI citation share (the percentage of relevant AI responses that reference your content) and retrieval presence rate (the frequency with which your pages appear in the retrieval set for target prompt clusters). These metrics replace organic traffic sessions and SERP position as primary performance indicators. Attribution tooling for LLM referral is still maturing, but citation monitoring platforms and prompt-based audit workflows provide directional measurement today.
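Both metrics reduce to simple ratios over a prompt audit. The sketch below assumes a hypothetical audit log with one record per prompt run, holding the set of domains that were retrieved and the set that were cited. In practice the retrieved set is often not directly observable and has to be approximated, so the record shape and domain names here are illustrative assumptions only.

```python
# Hypothetical audit log: one record per synthetic prompt run against an AI system.
audit_log = [
    {"prompt": "How do I get paid faster as a freelancer?",
     "retrieved": {"example.com", "rival.io"}, "cited": {"example.com"}},
    {"prompt": "Should I enforce net-30 payment terms?",
     "retrieved": {"example.com", "rival.io"}, "cited": {"rival.io"}},
    {"prompt": "What is the best way to design an invoice?",
     "retrieved": {"example.com"}, "cited": set()},
    {"prompt": "How do I choose an invoicing vendor?",
     "retrieved": {"rival.io"}, "cited": {"rival.io"}},
]

def share(records, domain, key):
    """Fraction of records whose `key` set ("retrieved" or "cited") contains the domain."""
    if not records:
        return 0.0
    return sum(domain in record[key] for record in records) / len(records)

citation_share = share(audit_log, "example.com", "cited")
retrieval_presence_rate = share(audit_log, "example.com", "retrieved")
print(f"citation share: {citation_share:.0%}")
print(f"retrieval presence rate: {retrieval_presence_rate:.0%}")
```

Running the same prompt set on a fixed schedule makes the two ratios comparable over time, which matters more than their absolute values while measurement tooling is still maturing.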

About the Author

Kurt Fischman is the CEO and founder of Growth Marshal, an AI-native search agency that helps challenger brands get recommended by large language models.

All frameworks, retrieval benchmarks, and scoring systems verified as of October 2025. This article is reviewed quarterly. AI retrieval architectures and LLM platform behaviors may have changed since publication.
